We gave Claude the same 8 financial research tasks twice.The data source made all the difference.

May 2026•Bigdata team

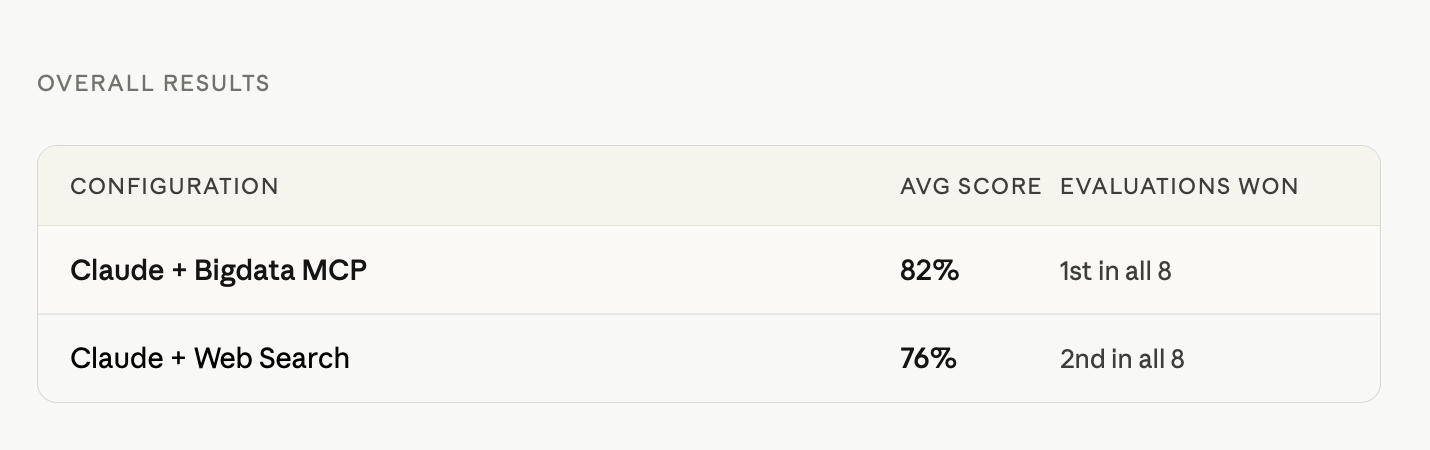

Same model, same prompts: once using web search, once using structured financial data via Bigdata.com MCP. We evaluated 16 reports across 7 metrics. Here’s what stood out.

The eight tasks covered the core workflows of institutional equity research: two earnings previews (Eli Lilly), an earnings digest (Home Depot), a company brief (Reddit), a risk assessment (Tesla), a scenario analysis (Figma), a variant perception (AMD), and a macro-sector brief. Every task ran twice on identical prompts, once with Claude using web search only, once with Claude connected to Bigdata.com's financial data infrastructure via MCP. Reports were evaluated independently before cross-comparison.

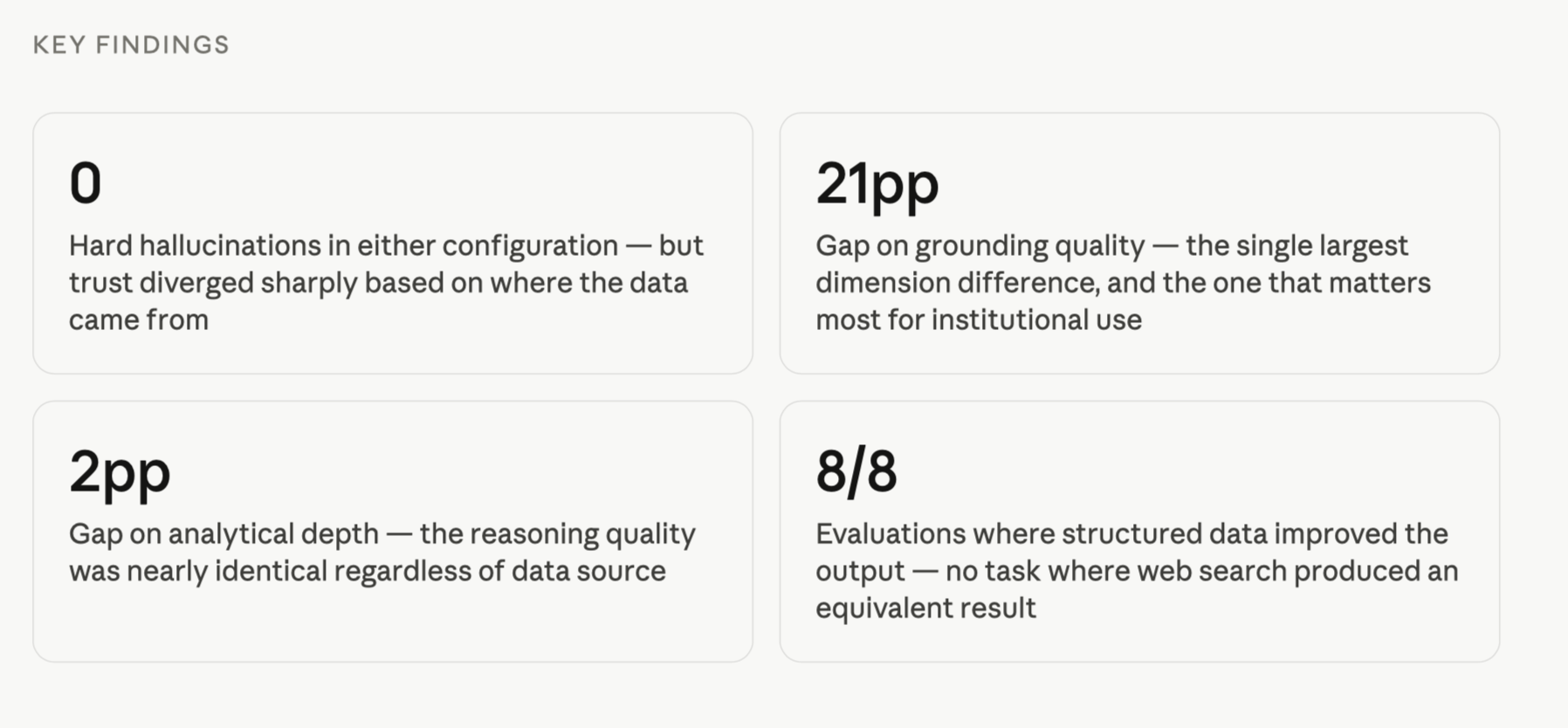

Claude with Bigdata MCP won all 8 evaluations. The gap was 6 percentage points on composite, but 21 points on the dimension that matters most for institutional trust.

A 6-point composite gap may look modest but it isn’t, especially once you see which dimensions are driving it. The model's reasoning quality was nearly identical. The evidence pipeline was not.

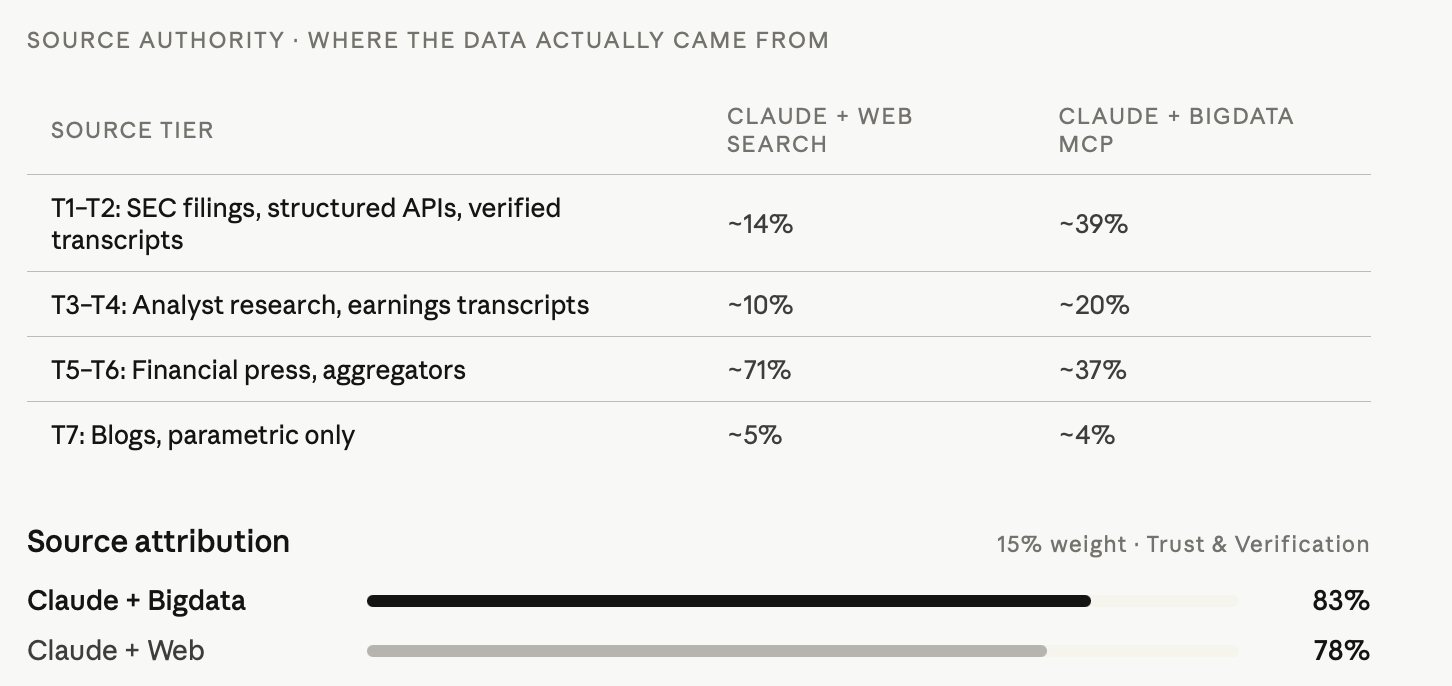

The widest gap in the evaluation (21 points) and the dimension with the highest variance across all 8 tasks. Grounding quality measures the provenance of the data, not just whether a source is cited. With web search, roughly 63% of financial figures came from general media aggregators. With Bigdata MCP, roughly 38% came from SEC filings, structured APIs, and verified transcripts, the same sources a Bloomberg terminal would use. The gap isn't about how many sources are cited. It's about how far the data has traveled before it reaches the report.

Attribution rates were high on both sides: 92% for Bigdata-powered reports, 89% for web search. Claude with web search was disciplined about citation even without structured data access. The 5-point gap reflects not just attribution coverage but traceability quality: a Quartr earnings transcript citation is more auditable than a Benzinga summary of the same call, even if both are properly cited.

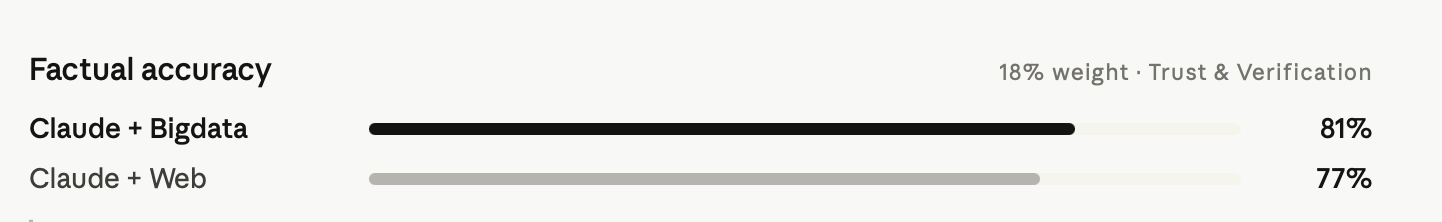

Zero hard hallucinations in either configuration, a strong baseline result. The 4-point gap instead reflects precision: vague revenue ranges, imprecise market share figures, and figures that passed through a journalist's interpretation before landing in the report. Structured data APIs return deterministic, verifiable numbers. Web search introduces a longer provenance chain where rounding, paraphrasing, and editorial framing accumulate.

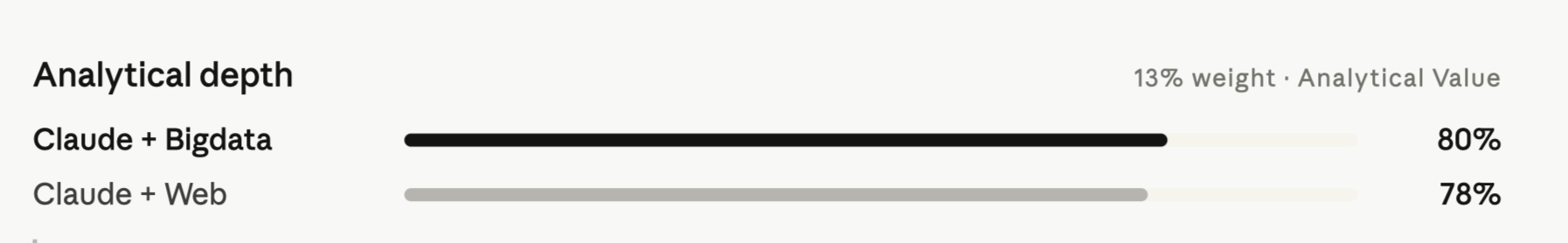

The closest dimension in the entire evaluation, just 2 points separating the configurations. Claude's analytical reasoning quality held up regardless of data source. In the AMD variant perception, the web-search report produced the sharpest variant articulation of any report in that evaluation, even as it fell behind on grounding by 26 points. The reasoning engine is not the bottleneck. The data pipeline is.

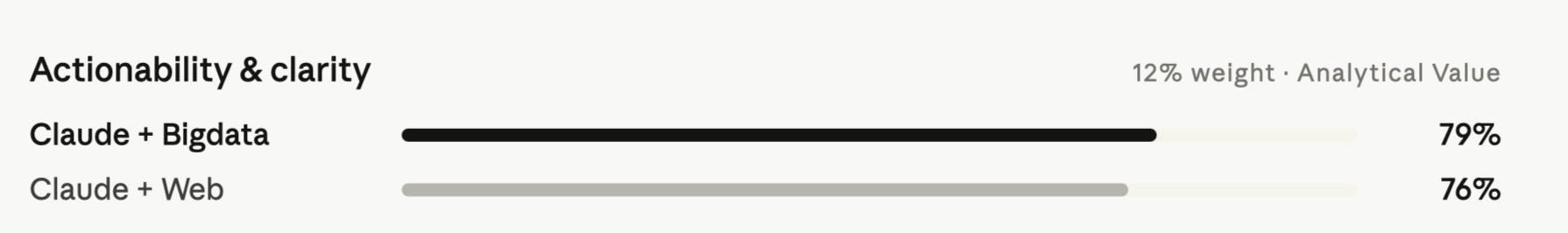

Actionability requires more than good analysis, it requires the data to support it. The Bigdata-powered Tesla risk assessment included a structured risk matrix with current status, direction, and impact for 10 risk factors. The web-search version covered the same themes but couldn't provide the institutional fund flow data, ESG governance scores, or same-day equity levels needed to make each risk factor concrete and calibrated.

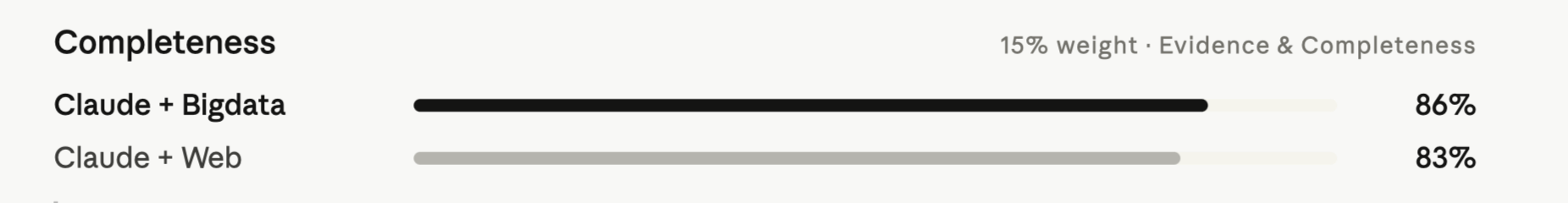

Both configurations covered required sections well - Claude's structured reasoning applies regardless of data source. The 3-point gap shows up at the margins: fund flow intelligence, institutional holder counts, ESG scoring, hiring trend data, and earnings transcript citations only appeared consistently when the structured data layer was present. These aren't decorative, as institutional ownership shifts and transcript citations are directly relevant to investment decisions.

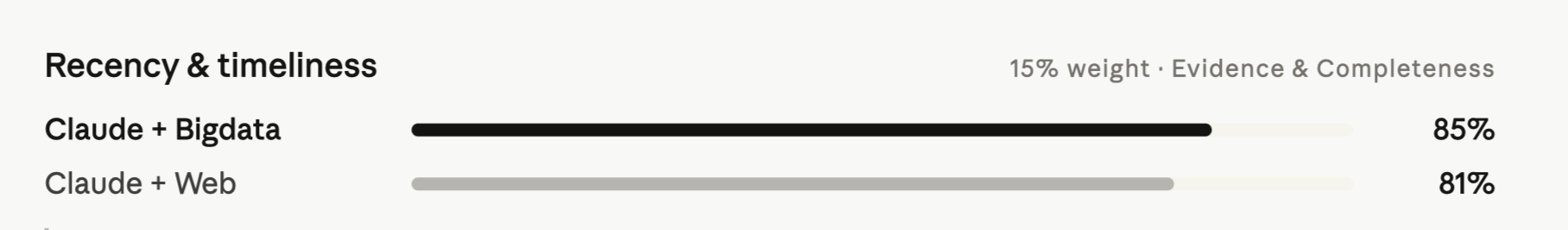

Both configurations were broadly current, but the Bigdata connector's structured earnings calendar and same-day price data gave it a consistent edge on timeliness. The macro brief evaluation was the clearest example: the Bigdata report incorporated the February NFP print (-92K vs +59K expected), the VIX spike to 29.49, and the Iran-driven oil price surge, all released on the same day as the report. These weren't supplementary details; they were the day's market-moving events.

Bottom line

The model's analytical capability is not what differentiates these reports. Claude reasons well regardless of the data source. What changes is whether the evidence pipeline can support that reasoning at institutional standards.

Even the top-scoring configuration averaged 82%, below the 90%+ threshold that defines institutional-grade research. The remaining gap is structural: no AI configuration in this evaluation modeled a quarter, built a revenue bridge, assigned probability weights to scenarios, or produced a DCF. Those capabilities represent the next frontier. The data infrastructure is a prerequisite for getting there, not the destination.

To go deeper, check out our Docs space for side-by-side evaluations comparing AI-generated financial reports using Bigdata MCP tools versus web search.